AI Agent Tool Use: How Function Calling Makes Agents Actually Useful (2026)

Without tools, an LLM is just a very eloquent parrot. It can generate text, but it can't check the weather, send an email, query a database, or do anything in the real world. Tool use (also called function calling) is what transforms a language model into an agent.

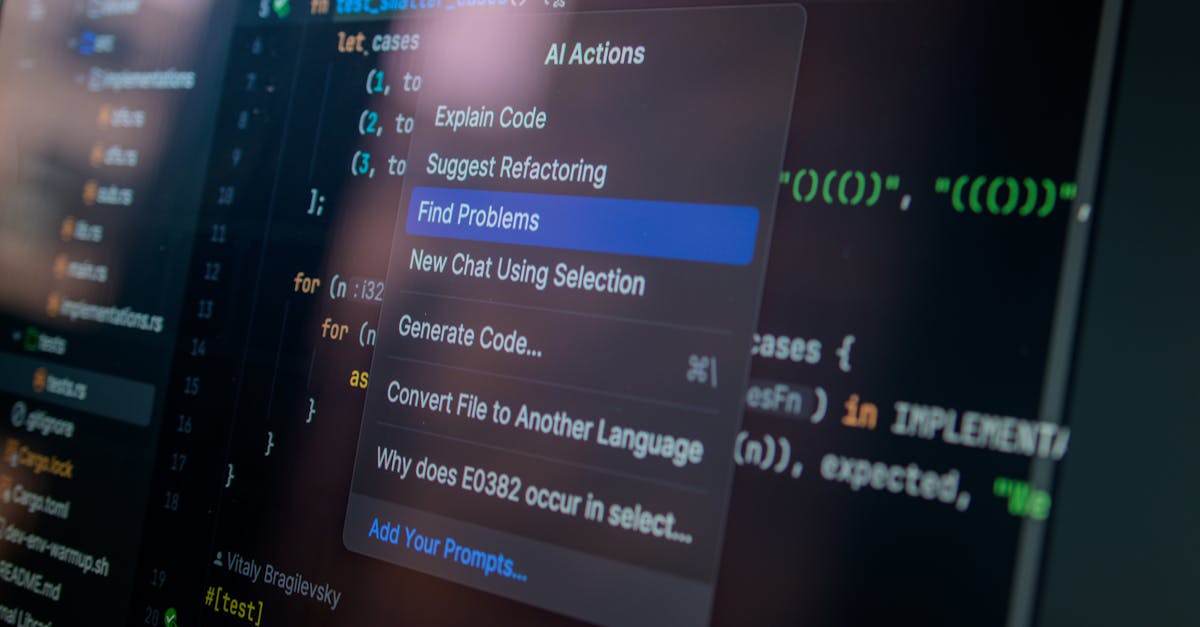

Photo by Daniil Komov on Pexels

This guide covers how tool use works under the hood and how to design good tool schemas, handle errors gracefully, orchestrate multi-tool workflows, and keep everything secure.

How Tool Use Works

The concept is simple: you tell the LLM what tools are available, and it decides when to use them. The LLM doesn't actually execute anything — it outputs a structured request that your code executes.
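To make that split concrete, here is a minimal sketch of the executing side, assuming the model's request arrives as a tool name plus a JSON string of arguments. The `TOOLS` registry, `get_weather`, and `dispatch_tool_call` are hypothetical names chosen for illustration and are not part of any provider SDK.

```python
import json

# Hypothetical tool implementation; a real one would call a weather API.
def get_weather(city: str) -> dict:
    return {"city": city, "forecast": "sunny"}

# Registry mapping tool names (as advertised to the LLM) to Python callables.
TOOLS = {"get_weather": get_weather}

def dispatch_tool_call(name: str, arguments_json: str) -> str:
    """Run the tool the LLM asked for and return the outcome as a JSON string."""
    if name not in TOOLS:
        return json.dumps({"status": "error", "error": f"Unknown tool: {name}"})
    try:
        args = json.loads(arguments_json)
    except json.JSONDecodeError as e:
        return json.dumps({"status": "error", "error": f"Arguments are not valid JSON: {e}"})
    if not isinstance(args, dict):
        return json.dumps({"status": "error", "error": "Arguments must be a JSON object"})
    result = TOOLS[name](**args)
    return json.dumps({"status": "success", "result": result})

# The LLM only produced the name and the argument text; nothing ran until this call.
print(dispatch_tool_call("get_weather", '{"city": "Paris"}'))
```

Keeping the registry explicit means a tool the model invents, or one you never registered, is rejected instead of being looked up dynamically.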

    # The tool use loop (pseudocode: `llm` stands in for your provider's client)
    def run_agent(llm, messages, tool_definitions):
        while True:
            # 1. Send messages + available tools to the LLM
            response = llm.call(messages, tools=tool_definitions)

            # 2. If the LLM did not request a tool, it is done
            if not response.has_tool_call:
                return response.text

            tool_name = response.tool_call.name
            tool_args = response.tool_call.arguments

            # 3. YOUR CODE executes the tool
            result = execute_tool(tool_name, tool_args)

            # 4. Record the request, then send the result back to the LLM
            messages.append({"role": "assistant", "tool_call": response.tool_call})
            messages.append({"role": "tool", "content": result})

Defining Tools (Schema Design)

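Tools are defined with JSON Schema. Each definition gives the tool's name, a description of what it does, and the parameters it accepts. The return value is not part of the schema in either format below, so describe it in the description text.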

OpenAI / GPT Format

    tools = [
        {
            "type": "function",
            "function": {
                "name": "search_products",
                "description": "Search the product catalog by keyword, category, or price range. Returns up to 10 matching products.",
                "parameters": {
                    "type": "object",
                    "properties": {
                        "query": {
                            "type": "string",
                            "description": "Search keywords (e.g., 'wireless headphones')"
                        },
                        "category": {
                            "type": "string",
                            "enum": ["electronics", "clothing", "home", "sports"],
                            "description": "Product category to filter by"
                        },
                        "max_price": {
                            "type": "number",
                            "description": "Maximum price in USD"
                        },
                        "in_stock_only": {
                            "type": "boolean",
                            "description": "Only return products currently in stock",
                            "default": True  # Python literal; serialized as JSON true
                        }
                    },
                    "required": ["query"]
                }
            }
        }
    ]

Anthropic / Claude Format

    tools = [
        {
            "name": "search_products",
            "description": "Search the product catalog. Returns up to 10 matching products with name, price, and availability.",
            "input_schema": {
                "type": "object",
                "properties": {
                    "query": {
                        "type": "string",
                        "description": "Search keywords"
                    },
                    "category": {
                        "type": "string",
                        "enum": ["electronics", "clothing", "home", "sports"]
                    },
                    "max_price": {"type": "number"},
                    "in_stock_only": {"type": "boolean", "default": True}  # Python literal
                },
                "required": ["query"]
            }
        }
    ]

Schema Design Best Practices

- Descriptive names: `search_products`, not `sp`. The LLM reads the name to decide when to use the tool.

- Rich descriptions: Include what the tool does, what it returns, and when to use it. The description is your prompt to the LLM about the tool.

- Typed parameters: Use specific types (`number`, `boolean`, `enum`) instead of freeform strings where possible.

- Minimal required params: Only mark truly required parameters. Defaults for optional ones reduce LLM cognitive load.

- Return format hints: Mention in the description what the output looks like so the LLM knows what to expect.
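These practices pay off on the executing side too, because a typed schema can be checked mechanically before a tool runs. The following is a minimal sketch, assuming a flat schema like the `search_products` one above. `validate_args` is a hypothetical helper that covers only `type`, `enum`, `required`, and `default`, not the full JSON Schema specification.

```python
# Map JSON Schema type names to Python types (only the subset used here).
JSON_TYPES = {"string": str, "number": (int, float), "boolean": bool}

SCHEMA = {
    "type": "object",
    "properties": {
        "query": {"type": "string"},
        "category": {"type": "string", "enum": ["electronics", "clothing", "home", "sports"]},
        "max_price": {"type": "number"},
        "in_stock_only": {"type": "boolean", "default": True},
    },
    "required": ["query"],
}

def validate_args(schema: dict, args: dict) -> dict:
    """Check LLM-supplied arguments against the schema and fill in defaults."""
    props = schema["properties"]
    for name in schema.get("required", []):
        if name not in args:
            raise ValueError(f"Missing required parameter: {name}")
    cleaned = {}
    for name, value in args.items():
        if name not in props:
            raise ValueError(f"Unknown parameter: {name}")
        spec = props[name]
        # bool is a subclass of int in Python, so reject it for non-boolean fields
        if isinstance(value, bool) and spec["type"] != "boolean":
            raise ValueError(f"{name} must be of type {spec['type']}")
        if not isinstance(value, JSON_TYPES[spec["type"]]):
            raise ValueError(f"{name} must be of type {spec['type']}")
        if "enum" in spec and value not in spec["enum"]:
            raise ValueError(f"{name} must be one of {spec['enum']}")
        cleaned[name] = value
    # Apply defaults for optional parameters the LLM left out
    for name, spec in props.items():
        if name not in cleaned and "default" in spec:
            cleaned[name] = spec["default"]
    return cleaned

print(validate_args(SCHEMA, {"query": "wireless headphones", "max_price": 50}))
```

The defaults are applied in your own code here because `default` in JSON Schema is an annotation, so it is safer not to assume the model or the API will fill it in for you.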

Common Tool Categories

| Category | Examples | Risk / complexity |
|---|---|---|
| Read-only | Search, lookup, get status, fetch data | Low (safe) |
| Write | Create record, send email, post message | Medium (reversible) |
| Destructive | Delete, overwrite, cancel subscription | High (irreversible) |
| Computational | Calculate, convert, analyze data | Low (deterministic) |
| External API | Weather, stock prices, map directions | Medium (latency, rate limits) |
| Code execution | Run Python, SQL query, shell command | High (security critical) |

Error Handling

Tools fail. APIs return errors, databases time out, inputs are invalid. How you report errors to the LLM determines whether it recovers gracefully or spirals.

    import json

    # ValidationError, RateLimitError, product_db, and send_email are assumed
    # to be defined elsewhere in your application.
    def execute_tool(name, args):
        try:
            if name == "search_products":
                results = product_db.search(**args)
                return json.dumps({"status": "success", "results": results})
            elif name == "send_email":
                send_email(**args)
                return json.dumps({"status": "success", "message": "Email sent"})
            else:
                # Without this branch an unknown tool name would silently return None
                return json.dumps({"status": "error", "error": f"Unknown tool: {name}"})
        except ValidationError as e:
            # Tell the LLM what was wrong so it can fix the input
            return json.dumps({
                "status": "error",
                "error": f"Invalid input: {e}",
                "hint": "Check parameter types and required fields"
            })
        except RateLimitError:
            return json.dumps({
                "status": "error",
                "error": "Rate limited. Try again in 30 seconds.",
                "retry_after": 30
            })
        except Exception as e:
            # Generic error: still give the LLM useful info
            return json.dumps({
                "status": "error",
                "error": str(e),
                "hint": "This tool is temporarily unavailable"
            })

Multi-Tool Orchestration

Real-world agents often need multiple tools in sequence. The LLM naturally chains tool calls when it understands the available tools.
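Chaining is easiest to see with the model stubbed out. The sketch below is a simulation under stated assumptions: `ScriptedLLM` is a hypothetical stand-in that replays a fixed list of decisions instead of calling a real model, the two tools return canned data, and `MAX_STEPS` is an illustrative cap so a confused agent cannot loop forever.

```python
import json

MAX_STEPS = 5  # illustrative budget for tool calls in one run

class ScriptedLLM:
    """Hypothetical stand-in for a model: replays pre-written decisions in order."""
    def __init__(self, script):
        self.script = list(script)

    def call(self, messages):
        return self.script.pop(0)

# Canned tool implementations for the simulation
def lookup_contact(query):
    return {"name": "John Smith", "id": "c_1"}

def check_availability(contact_id, date):
    return {"available_slots": ["10:00", "14:00"]}

TOOLS = {"lookup_contact": lookup_contact, "check_availability": check_availability}

def run_chain(llm, user_message):
    messages = [{"role": "user", "content": user_message}]
    for _ in range(MAX_STEPS):
        step = llm.call(messages)
        if "tool" not in step:
            # No tool requested: the model has produced its final answer
            return step["text"], messages
        result = TOOLS[step["tool"]](**step["args"])
        # Each result goes back into the conversation so the next step can use it
        messages.append({"role": "tool", "name": step["tool"], "content": json.dumps(result)})
    raise RuntimeError("Step budget exhausted before the agent finished")

llm = ScriptedLLM([
    {"tool": "lookup_contact", "args": {"query": "John"}},
    {"tool": "check_availability", "args": {"contact_id": "c_1", "date": "2026-03-31"}},
    {"text": "John is free at 10:00 or 14:00."},
])
answer, history = run_chain(llm, "When is John free next Tuesday?")
print(answer)
```

The second call depends on the `id` returned by the first, which is why these two steps have to run in sequence rather than in parallel.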

    # Example: "Book a meeting with John next Tuesday"
    # The agent will:

    # Step 1: Look up John's contact info
    → tool_call: search_contacts(query="John")
    ← result: {"name": "John Smith", "email": "[email protected]"}

    # Step 2: Check John's calendar
    → tool_call: check_availability(email="[email protected]", date="2026-03-31")
    ← result: {"available_slots": ["10:00", "14:00", "16:00"]}

    # Step 3: Check your calendar
    → tool_call: check_availability(email="[email protected]", date="2026-03-31")
    ← result: {"available_slots": ["09:00", "10:00", "11:00", "14:00"]}

    # Step 4: Find overlapping slot and book
    → tool_call: create_meeting(
        title="Meeting with John Smith",
        attendees=["[email protected]", "[email protected]"],
        date="2026-03-31",
        time="10:00",
        duration=30
    )
    ← result: {"status": "booked", "meeting_id": "mtg_123"}

Parallel Tool Calls

Modern APIs (OpenAI, Anthropic) support parallel tool calls — the LLM can request multiple tools at once when there are no dependencies:

    # LLM response with parallel tool calls (simplified):
    {
      "tool_calls": [
        {"name": "get_weather", "args": {"city": "Paris"}},
        {"name": "get_weather", "args": {"city": "London"}},
        {"name": "get_exchange_rate", "args": {"from": "EUR", "to": "GBP"}}
      ]
    }

    # Execute all three concurrently
    import asyncio

    async def execute_parallel(tool_calls):
        # execute_tool is synchronous, so run each call in a worker thread;
        # passing its return values straight to gather() would raise a TypeError
        tasks = [asyncio.to_thread(execute_tool, tc["name"], tc["args"]) for tc in tool_calls]
        return await asyncio.gather(*tasks)

Security Considerations

1. Never Trust LLM-Generated Arguments Blindly

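    # BAD: Direct execution of LLM output
    def execute_sql(query):
        return db.execute(query)  # SQL injection!

    # GOOD: Parameterized queries with validation
    ALLOWED_STATUSES = {"pending", "shipped", "delivered"}

    def search_orders(customer_id, status=None):
        # bool is a subclass of int, so exclude it explicitly
        if not isinstance(customer_id, int) or isinstance(customer_id, bool):
            raise ValidationError("customer_id must be an integer")
        query = "SELECT * FROM orders WHERE customer_id = %s"
        params = [customer_id]
        if status is not None:
            # Reject unknown values instead of silently dropping the filter
            if status not in ALLOWED_STATUSES:
                raise ValidationError(f"status must be one of {sorted(ALLOWED_STATUSES)}")
            query += " AND status = %s"
            params.append(status)
        return db.execute(query, params)

2. Permission Levels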

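    import json

    TOOL_PERMISSIONS = {
        "search_products": "read",        # Always allowed
        "get_order_status": "read",       # Always allowed
        "send_email": "write",            # Requires confirmation
        "delete_account": "destructive",  # Requires human approval
        "run_code": "dangerous",          # Sandboxed only
    }

    def execute_with_permissions(tool_name, args, user_approved=False):
        # Unknown tools default to the most restrictive level
        level = TOOL_PERMISSIONS.get(tool_name, "dangerous")
        if level == "read":
            return execute_tool(tool_name, args)
        elif level == "write":
            if user_approved:
                return execute_tool(tool_name, args)
            # Ask for confirmation rather than reporting the tool as blocked
            return json.dumps({"status": "pending_confirmation",
                               "message": f"Action '{tool_name}' requires user confirmation"})
        elif level == "destructive":
            # Never executed here; approval is handled out of band by a human
            return json.dumps({"status": "pending_approval",
                               "message": f"Action '{tool_name}' requires human approval"})
        else:
            # "dangerous" tools must go through a separate sandboxed path
            return json.dumps({"status": "blocked",
                               "message": f"Tool '{tool_name}' not allowed"})

3. Rate Limiting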

    import time

    # Prevent runaway agents from making 1000 API calls
    # (RateLimitError is assumed to be defined by your application)
    class ToolRateLimiter:
        def __init__(self, max_calls_per_minute=20, max_total=100):
            self.calls = []  # timestamps of calls in the last 60 seconds
            self.total = 0   # lifetime call count, never pruned
            self.max_per_minute = max_calls_per_minute
            self.max_total = max_total

        def check(self):
            now = time.time()
            self.calls = [t for t in self.calls if now - t < 60]
            if self.total >= self.max_total:
                raise RateLimitError("Maximum tool calls exceeded")
            if len(self.calls) >= self.max_per_minute:
                raise RateLimitError("Too many tool calls per minute")
            self.calls.append(now)
            self.total += 1

Tool Use Across Providers

| Feature | OpenAI | Anthropic | DeepSeek |
|---|---|---|---|
| Function calling | Yes (all models) | Yes (all Claude models) | Yes (V3+) |
| Parallel tool calls | Yes | Yes | Yes |
| Streaming tool calls | Yes | Yes | Yes |
| Tool choice control | auto/required/none/specific | auto/any/tool | auto/required |
| Max tools per request | 128 | No hard limit | 64 |
| Schema format | JSON Schema | JSON Schema | JSON Schema |
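The tool choice row is the one most likely to affect your code, because the providers spell the same idea differently. The sketch below builds the `tool_choice` value for two common cases, based on the OpenAI Chat Completions and Anthropic Messages request formats. Field names can change between API versions, so treat it as a starting point to verify against current documentation. `force_tool` and `require_any_tool` are hypothetical helper names, and DeepSeek is left out.

```python
def force_tool(provider: str, tool_name: str):
    """Build the tool_choice value that forces the model to call one specific tool."""
    if provider == "openai":
        return {"type": "function", "function": {"name": tool_name}}
    if provider == "anthropic":
        return {"type": "tool", "name": tool_name}
    raise ValueError(f"Unsupported provider: {provider}")

def require_any_tool(provider: str):
    """Build the tool_choice value meaning 'call some tool, model picks which'."""
    if provider == "openai":
        return "required"
    if provider == "anthropic":
        return {"type": "any"}
    raise ValueError(f"Unsupported provider: {provider}")

print(force_tool("openai", "search_products"))
print(force_tool("anthropic", "search_products"))
```

Forcing a specific tool is useful when the first step of a workflow is fixed, for example always running a search before answering.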

MCP: The Universal Tool Protocol

MCP (Model Context Protocol) is an open standard, introduced by Anthropic, for connecting AI agents to external tools and data sources. Instead of building custom integrations for each tool, MCP provides a universal interface.

    # An MCP server exposes tools via a standard protocol.
    # Your agent connects to MCP servers to discover and use tools.
    # Example: client configuration for a GitHub MCP server
    {
      "mcpServers": {
        "github": {
          "command": "npx",
          "args": ["-y", "@modelcontextprotocol/server-github"],
          "env": {"GITHUB_PERSONAL_ACCESS_TOKEN": "ghp_..."}
        }
      }
    }

    # The agent discovers the server's tools, for example:
    # - create_issue
    # - search_repositories
    # - create_pull_request
    # ...and uses them like any other tool

MCP is especially powerful because:

- Tool definitions are standardized across providers

- Servers can be reused across different agent frameworks

- Auth, rate limiting, and caching can be handled at the server level

- Growing ecosystem: 100+ MCP servers for popular services
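Standardized definitions are what make adapters cheap to write. An MCP server describes each tool with a `name`, a `description`, and an `inputSchema` (a JSON Schema object), so mapping it onto the two provider formats shown earlier is mostly a matter of renaming keys. A minimal sketch, where `mcp_to_anthropic`, `mcp_to_openai`, and the sample tool are illustrative names rather than part of any SDK:

```python
def mcp_to_anthropic(tool: dict) -> dict:
    """Convert an MCP tool description to the Anthropic tool format."""
    return {
        "name": tool["name"],
        "description": tool.get("description", ""),
        "input_schema": tool["inputSchema"],
    }

def mcp_to_openai(tool: dict) -> dict:
    """Convert an MCP tool description to the OpenAI function-calling format."""
    return {
        "type": "function",
        "function": {
            "name": tool["name"],
            "description": tool.get("description", ""),
            "parameters": tool["inputSchema"],
        },
    }

# Sample entry shaped like one item of an MCP tool listing (contents are made up)
sample_tool = {
    "name": "create_issue",
    "description": "Create a new issue in a repository.",
    "inputSchema": {
        "type": "object",
        "properties": {"title": {"type": "string"}, "body": {"type": "string"}},
        "required": ["title"],
    },
}

print(mcp_to_anthropic(sample_tool)["input_schema"]["required"])
print(mcp_to_openai(sample_tool)["function"]["name"])
```

Because the JSON Schema body passes through unchanged, the same server definition can feed either provider without per-tool glue code.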

Key Takeaways

- Tools = actions. They're what make an LLM an agent instead of a chatbot. Without tools, it can only talk.

- Schema design matters. Clear names, rich descriptions, typed parameters. The schema is your prompt to the LLM about each tool.

- The LLM never executes. It requests; your code executes. This separation is your security boundary.

- Return structured errors. The LLM needs to understand what went wrong to recover. "Error 500" is useless; "Rate limited, retry in 30s" is actionable.

- Permission levels are essential. Read-only tools can run freely. Write tools need confirmation. Destructive tools need human approval.

- MCP is the future of tool integration. Universal protocol, growing ecosystem, one integration works everywhere.
