Top 7 AI Agent Frameworks in 2026: A Developer's Comparison Guide

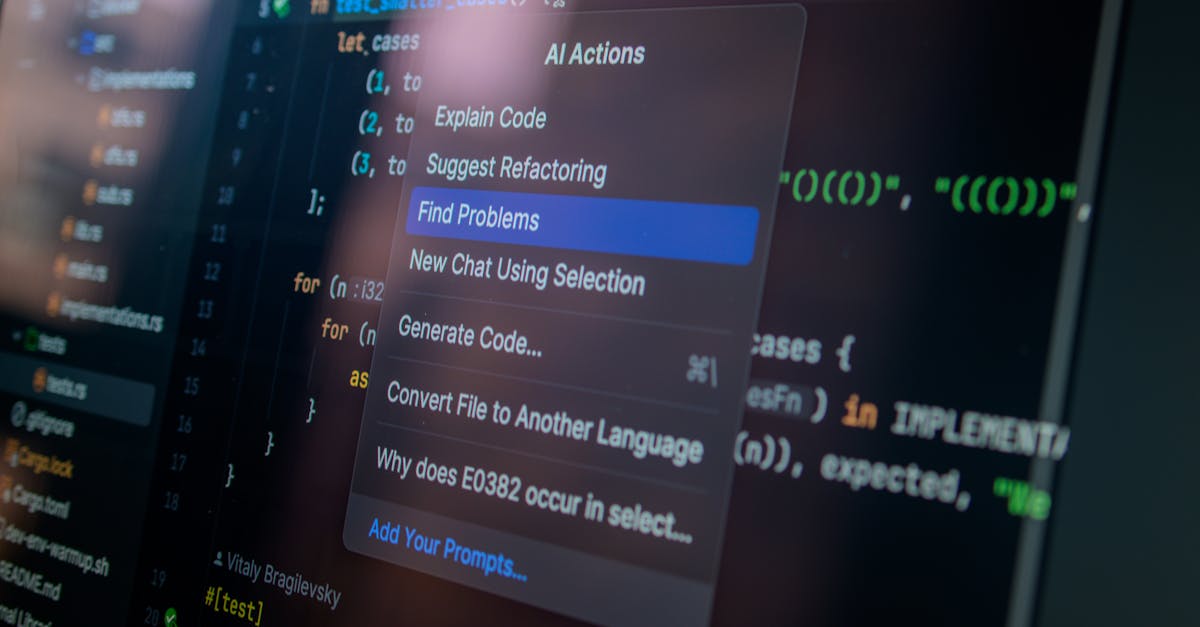

Photo by Daniil Komov on Pexels

There are now at least seven production-grade AI agent frameworks competing for your stack. Each takes a fundamentally different approach to the same problem: how do you build autonomous systems that actually work?

We run an AI agents newsletter that's itself powered by an autonomous agent pipeline. We've read the docs, tried the APIs, and tracked the ecosystem since these tools launched. Here's our honest take on each framework as of March 2026.

The Quick Comparison

| Framework | Best For | Model Lock-in | Learning Curve |
|---|---|---|---|
| LangGraph | Complex stateful workflows | None | Steep |
| CrewAI | Team-based agent systems | None | Easy |
| AutoGen | Multi-agent conversations | None | Medium |
| OpenAI Agents SDK | Fast prototyping with GPT | OpenAI only | Easy |
| Claude Agent SDK | Secure, sandboxed agents | Claude only | Medium |
| Google ADK | Multimodal + A2A | Gemini-first | Medium |
| Smolagents | Lightweight code agents | None | Easy |

1. LangGraph

Production-ready · Model-agnostic · Stateful

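LangGraph is the heavyweight. Built on top of LangChain, it models your agent's workflow as a directed graph where nodes are actions and edges are transitions. When you compile the graph with a checkpointer, state is saved after every step, so an agent that crashes mid-task can resume from its last checkpoint instead of starting over. How durable that is depends on the checkpointer: an in-memory one only survives within the running process, while a database-backed one survives restarts.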

Why developers pick it

- Built-in persistence: State checkpointing means long-running agents survive failures

- Explicit control flow: You define exactly how your agent moves between steps

- Human-in-the-loop: Native support for approval gates and manual overrides

- Model-agnostic: Swap between OpenAI, Claude, Gemini, or local models

The trade-offs

- Steep learning curve — you need to think in graphs, not scripts

- Verbose for simple tasks (50+ lines for a basic agent)

- LangChain dependency brings complexity even if you only want the graph layer

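```python
# LangGraph: define a simple agent as a graph
from langgraph.checkpoint.memory import MemorySaver
from langgraph.graph import StateGraph, START, END

# AgentState, research_node, write_node, review_node, and should_revise
# are assumed to be defined elsewhere in your project.
graph = StateGraph(AgentState)
graph.add_node("research", research_node)
graph.add_node("write", write_node)
graph.add_node("review", review_node)

graph.add_edge(START, "research")   # entry point
graph.add_edge("research", "write")
graph.add_edge("write", "review")   # without this edge, "review" is unreachable
graph.add_conditional_edges("review", should_revise,
                            {"revise": "write", "approve": END})

# MemorySaver keeps checkpoints in memory only; use a persistent
# checkpointer if state must survive a process restart.
agent = graph.compile(checkpointer=MemorySaver())
```

Pick LangGraph if: You're building production agents that need state persistence, complex branching logic, or human approval steps. This is the framework most teams choose when "it has to work reliably at scale."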
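To make the checkpoint-and-resume idea concrete, here is a minimal, framework-independent sketch in plain Python. It is not LangGraph's implementation: the node functions, the `run_graph` helper, the JSON checkpoint file, and the `crash_after` switch are all hypothetical names invented for illustration. It only shows the general technique of saving the next node and the state after every step.

```python
import json
import os
import tempfile

END = "__end__"  # illustrative sentinel, not imported from any library

def research(state):
    return {**state, "notes": "three sources found"}

def write(state):
    revisions = state.get("revisions", 0) + 1
    return {**state, "draft": f"Draft v{revisions}", "revisions": revisions}

def review(state):
    # Hypothetical rule: approve once the draft has been written twice.
    return {**state, "approved": state["revisions"] >= 2}

NODES = {"research": research, "write": write, "review": review}

def next_node(current, state):
    """Edges: research -> write -> review -> (write again | END)."""
    if current == "research":
        return "write"
    if current == "write":
        return "review"
    return END if state["approved"] else "write"

def run_graph(path, start="research", crash_after=None):
    """Run the graph, writing a checkpoint to `path` after every step."""
    if os.path.exists(path):
        with open(path) as f:
            saved = json.load(f)  # resume from the last checkpoint
        node, state = saved["next"], saved["state"]
    else:
        node, state = start, {}
    steps = 0
    while node != END:
        state = NODES[node](state)
        node = next_node(node, state)
        with open(path, "w") as f:
            json.dump({"next": node, "state": state}, f)
        steps += 1
        if crash_after is not None and steps == crash_after:
            raise RuntimeError("simulated crash")
    return state

checkpoint = os.path.join(tempfile.mkdtemp(), "agent.json")
try:
    run_graph(checkpoint, crash_after=2)  # fails right after the "write" step
except RuntimeError:
    pass
final = run_graph(checkpoint)  # resumes at "review", not at "research"
```

The second call never re-runs the research step, because the checkpoint already records that the next node is the review. A real checkpointer would also need to handle concurrent runs and partial writes, which this sketch ignores.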

2. CrewAI

Beginner-friendly · Role-based · YAML config

CrewAI takes a completely different approach: instead of graphs, you define a crew of agents with roles, goals, and backstories. They collaborate on tasks like a team of specialists. It's the most intuitive framework if you're coming from a product or business background.

Why developers pick it

- Lowest barrier to entry: Define agents in YAML, not code

- Role-based metaphor: "Researcher", "Writer", "Editor" — feels natural

- Active community: Large Discord, frequent updates, growing ecosystem

- Built-in delegation: Agents can hand off work to each other automatically

The trade-offs

- Less control over execution flow compared to LangGraph

- Debugging multi-agent interactions can be opaque

- Performance overhead from the role-playing abstraction layer

```python
# CrewAI: define a research crew
from crewai import Agent, Task, Crew

researcher = Agent(
    role="AI Research Analyst",
    goal="Find the latest AI agent developments",
    backstory="Senior tech journalist with 10 years of experience",
)

writer = Agent(
    role="Technical Writer",
    goal="Turn research into clear, actionable content",
    backstory="Dev advocate who writes for a 50k newsletter",
)

# The task list is elided here. Each Task needs at least a description,
# an expected_output, and the agent responsible for it.
crew = Crew(agents=[researcher, writer], tasks=[...])
result = crew.kickoff()
```

Pick CrewAI if: You want to prototype multi-agent workflows fast, your agents represent distinct roles, or your team includes non-engineers who need to understand the architecture. Best for content pipelines, research workflows, and business automation.
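The role-based hand-off can be pictured as a sequential pipeline where each agent's output becomes the next agent's context. The sketch below is plain Python, not CrewAI's API or internals: `Role`, `Assignment`, `fake_llm`, and `run_crew` are hypothetical names, and the model call is replaced by a deterministic string so the flow is easy to follow.

```python
from dataclasses import dataclass

@dataclass
class Role:
    name: str
    goal: str

@dataclass
class Assignment:
    description: str
    owner: Role

def fake_llm(role, description, context):
    """Stand-in for a model call; returns a deterministic string."""
    return f"[{role.name}] {description} (context: {context or 'none'})"

def run_crew(assignments):
    """Run assignments in order, feeding each output to the next as context."""
    context = ""
    outputs = []
    for assignment in assignments:
        context = fake_llm(assignment.owner, assignment.description, context)
        outputs.append(context)
    return outputs

researcher = Role("AI Research Analyst", "Find the latest AI agent developments")
writer = Role("Technical Writer", "Turn research into clear, actionable content")

outputs = run_crew([
    Assignment("Collect this week's agent framework news", researcher),
    Assignment("Draft the newsletter issue", writer),
])
```

The writer's output embeds the researcher's output as context, which is also why debugging can get opaque: each later step depends on whatever text the earlier agents produced.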

3. AutoGen (Microsoft)

Multi-agent chat · Conversation patterns · Mature

AutoGen pioneered the multi-agent conversation pattern. Agents talk to each other in structured dialogues — debates, round-robins, sequential chains. It's the most natural framework for tasks that require consensus or iterative refinement.

Why developers pick it

- Conversation-first: Agents interact through dialogue, not function calls

- Group chat: Multiple agents can debate, vote, and reach consensus

- Code execution: Built-in sandboxed Docker execution for generated code

- Flexible patterns: Sequential, round-robin, or custom conversation flows

The trade-offs

- Microsoft shifted focus to the broader Agent Framework — AutoGen is in maintenance mode

- Conversation-based routing can be unpredictable for deterministic workflows

- Token costs add up fast when agents chat with each other

Note: Microsoft announced the broader "Microsoft Agent Framework" in late 2025. AutoGen still works and has a large user base, but new investment is going into the platform-level framework. Worth watching, but probably not the best bet for greenfield projects in 2026.

Pick AutoGen if: Your agents need to have multi-party conversations — group debates, consensus-building, or iterative code review. The conversation patterns are still the most diverse of any framework.
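The token-cost trade-off is easy to underestimate, so here is a rough back-of-the-envelope sketch. It assumes a simplified round-robin chat in which every speaker re-reads the full transcript on each turn and every message has the same length. Real frameworks may truncate or summarize history, so treat this as an upper-bound illustration. The function name and the numbers are made up for the example.

```python
def round_robin_prompt_tokens(num_agents, rounds, tokens_per_message):
    """Total input tokens when every turn re-reads the whole transcript."""
    history = 0        # tokens in the shared transcript so far
    prompt_tokens = 0  # cumulative input tokens across all turns
    for _ in range(rounds):
        for _ in range(num_agents):
            prompt_tokens += history       # the speaker reads everything so far
            history += tokens_per_message  # then appends its own message
    return prompt_tokens

short_chat = round_robin_prompt_tokens(num_agents=3, rounds=4, tokens_per_message=200)
long_chat = round_robin_prompt_tokens(num_agents=3, rounds=8, tokens_per_message=200)
```

Under these assumptions the input cost grows with the square of the number of turns: doubling the rounds from 4 to 8 roughly quadruples the prompt tokens (13,200 versus 55,200 here), even though the chat is only twice as long.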

4. OpenAI Agents SDK

Official OpenAI · Batteries included · Simple API

The successor to the experimental Swarm project. OpenAI's official take on agent building: minimal boilerplate, built-in tools (code interpreter, web browsing, file handling), and native guardrails. If you're already in the OpenAI ecosystem, this is the path of least resistance.

Why developers pick it

- Zero-config tools: Code interpreter, web browsing, and file search out of the box

- Handoffs: Agents can transfer control to specialized sub-agents cleanly

- Built-in guardrails: Input/output validation without extra libraries

- Tracing: Native observability for debugging agent behavior

The trade-offs

- Locked to OpenAI models — no Claude, no Gemini, no local models

- Less customizable than LangGraph for complex state management

- Relatively new — ecosystem and community still growing

```python
# OpenAI Agents SDK: simple agent with tools
from agents import Agent, Runner

# web_search, code_interpreter, input_guardrail, and output_guardrail
# are assumed to be defined elsewhere.
agent = Agent(
    name="Research Assistant",
    instructions="You help users research AI topics.",
    tools=[web_search, code_interpreter],
    # Guardrails are passed as separate input and output lists.
    input_guardrails=[input_guardrail],
    output_guardrails=[output_guardrail],
)

result = Runner.run_sync(agent, "What's new in AI agents?")
print(result.final_output)
```

Pick OpenAI Agents SDK if: You're already using GPT-4o/o3, want the fastest path from zero to working agent, and don't need multi-model support. The built-in tools (especially code interpreter) save hours of integration work.
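Handoffs and input guardrails are simple ideas at their core, and a plain-Python sketch can show the control flow without the SDK. Nothing below is the Agents SDK API: `MiniAgent`, `run_agent`, `no_passwords`, and the keyword-based routing are hypothetical simplifications. In a real agent the model decides when to hand off, rather than a keyword match.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

class GuardrailTripped(Exception):
    """Raised when an input check rejects a request."""

@dataclass
class MiniAgent:
    name: str
    respond: Callable[[str], str]  # stand-in for a model call
    handoffs: Dict[str, "MiniAgent"] = field(default_factory=dict)

def no_passwords(text):
    """Input guardrail: refuse requests that mention a password."""
    if "password" in text.lower():
        raise GuardrailTripped("input mentions a password")

def run_agent(agent, user_input, input_guardrails=()):
    # 1. Validate the input before any agent sees it.
    for check in input_guardrails:
        check(user_input)
    # 2. Hand off to a specialist if a routing keyword matches.
    for keyword, target in agent.handoffs.items():
        if keyword in user_input.lower():
            return run_agent(target, user_input)
    # 3. Otherwise this agent answers itself.
    return f"{agent.name}: {agent.respond(user_input)}"

billing = MiniAgent("Billing", lambda text: "refund started")
triage = MiniAgent("Triage", lambda text: "how can I help?", {"refund": billing})

answer = run_agent(triage, "I need a refund", [no_passwords])
```

The guardrail runs once at the entry point, and the triage agent never produces an answer for requests it routes away, which is the "clean transfer of control" the handoff bullet describes.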

5. Claude Agent SDK (Anthropic)

Sandboxed execution · MCP-native · Security-first

Anthropic's framework is built around safety and tool-use. The standout feature is deep integration with the Model Context Protocol (MCP) — which Anthropic designed — giving agents a standardized way to connect to external tools and data sources. The 1M-token context window means agents can reason over entire codebases.

Why developers pick it

- Security-first: Sandboxed code execution with Constitutional AI guardrails

- MCP integration: Connect to any MCP-compatible tool server

- Massive context: 1M tokens — feed it an entire repo and let it work

- Extended thinking: Claude can show its reasoning chain transparently

The trade-offs

- Locked to Claude models

- Smaller ecosystem than LangGraph or CrewAI

- Fewer tutorials and community resources (still early)

Pick Claude Agent SDK if: You're building agents that handle sensitive data, need sandboxed execution, or want MCP-native tool integration. Best-in-class for coding agents and security-sensitive automation.
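For readers new to MCP, it helps to see what a tool call looks like on the wire. MCP messages are JSON-RPC 2.0, with `tools/list` to discover tools and `tools/call` to invoke one. The sketch below only builds those request messages. It leaves out the initialization handshake and the transport, and the tool name `search_issues` and its arguments are hypothetical.

```python
import itertools
import json

_request_ids = itertools.count(1)

def jsonrpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request object with a fresh id."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message

def list_tools_request():
    """Ask an MCP server which tools it exposes."""
    return jsonrpc_request("tools/list")

def call_tool_request(name, arguments):
    """Ask an MCP server to run one tool with the given arguments."""
    return jsonrpc_request("tools/call", {"name": name, "arguments": arguments})

# "search_issues" is a made-up tool name used only for illustration.
request = call_tool_request("search_issues", {"query": "login bug"})
wire_format = json.dumps(request)
```

Because every server speaks the same message shapes, an agent that can send these requests can talk to any MCP-compatible tool server, which is the portability argument behind the MCP integration bullet.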

6. Google Agent Development Kit (ADK)

Multimodal · Agent-to-Agent · Google Cloud

Google's entry focuses on two differentiators: multimodal agents (text + image + video + audio) and the Agent-to-Agent (A2A) protocol for inter-agent communication across organizational boundaries. If you're building agents that need to process images or video, or agents that talk to other companies' agents, ADK is the framework to watch.

Why developers pick it

- Multimodal-native: Agents that see, hear, and read — not just text

- A2A protocol: Standardized agent-to-agent communication

- Google Cloud integration: Vertex AI, BigQuery, Cloud Functions built in

- Model flexibility: Gemini-first but supports other models

The trade-offs

- Heavily tied to Google Cloud ecosystem

- A2A protocol adoption is still early

- Less battle-tested than LangGraph for production workloads

Pick Google ADK if: You need multimodal agents, agent-to-agent communication, or you're already deep in Google Cloud. The A2A protocol could be huge — but it's a bet on the future, not a sure thing today.

7. Smolagents (Hugging Face)

Lightweight · Code-first · Open source

The minimalist option. Smolagents takes a code-first approach: instead of generating JSON tool calls, agents write Python code directly. Because a single snippet can chain several tool calls and combine their results, a task often needs fewer LLM round trips, and the action is more transparent because you can read exactly what the agent is doing. Perfect for developers who want maximum control with minimum abstraction.

Why developers pick it

- Code-based actions: Agent writes Python, not JSON — faster and more flexible

- Minimal abstraction: ~1000 lines of core code, easy to understand and modify

- Model-agnostic: Works with any model via Hugging Face's inference API

- Open source: MIT license, full transparency

The trade-offs

- No built-in state persistence or checkpointing

- Less suited for complex multi-agent orchestration

- You'll build more infrastructure yourself

Pick Smolagents if: You want a lightweight, transparent agent framework you can fully understand and customize. Great for single-agent tasks, research prototypes, and developers who prefer code over configuration.
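The difference between JSON tool calls and code actions is easiest to see side by side. This is a plain-Python sketch, not Smolagents code: `get_price`, the price data, and both runner functions are hypothetical, and the "model output" is hard-coded. Note that emptying `__builtins__` is not a real sandbox. It only keeps the example small.

```python
def get_price(item):
    """Toy tool with made-up data."""
    return {"gpu": 1200, "ram": 150}[item]

TOOLS = {"get_price": get_price}

# JSON style: the model emits one tool call per turn, so two lookups
# take two turns, plus another turn to add the results.
json_turns = [
    {"tool": "get_price", "args": {"item": "gpu"}},
    {"tool": "get_price", "args": {"item": "ram"}},
]

def run_json_calls(turns):
    """Execute each JSON-style call separately and collect the results."""
    return [TOOLS[turn["tool"]](**turn["args"]) for turn in turns]

# Code style: the model emits one snippet that calls both tools and
# combines the results in a single action.
code_action = "result = get_price('gpu') + get_price('ram')"

def run_code_action(source):
    """Execute a code action with only the registered tools in scope."""
    namespace = {"__builtins__": {}, **TOOLS}
    exec(source, namespace)
    return namespace["result"]

separate_results = run_json_calls(json_turns)
combined_result = run_code_action(code_action)
```

The code action does in one step what the JSON style spreads over several, which is where the "fewer LLM calls" claim comes from. The cost is that you are now executing model-written code, so a production setup needs a proper sandbox.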

The Decision Matrix

Here's how to pick your framework based on what you're building:

| If you need... | Use this |
|---|---|
| Production reliability + state management | LangGraph |
| Multi-agent team collaboration | CrewAI |
| Agent-to-agent conversations | AutoGen |
| Fastest path to a working agent (GPT) | OpenAI Agents SDK |
| Security + sandboxed execution | Claude Agent SDK |
| Multimodal or cross-org agents | Google ADK |
| Lightweight, transparent agents | Smolagents |
| You have no idea (start here) | CrewAI or OpenAI |

Our take: There's no single "best" framework. LangGraph leads on production maturity. CrewAI leads on accessibility. The vendor SDKs (OpenAI, Claude, Google) are excellent if you're committed to their ecosystem. The real question isn't "which is best?" — it's "what am I building, and what trade-offs am I willing to make?"

What We Use at Paxrel

Our newsletter pipeline — AI Agents Weekly — uses a custom Python pipeline with direct API calls to DeepSeek V3 and Claude. No framework. Why? Because our workflow is linear (scrape → score → write → publish), and adding a framework would be over-engineering.

That said, if we were building something with branching logic, human approvals, or multi-agent collaboration, we'd reach for LangGraph first. It's the framework we'd trust with a production workload today.
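For a sense of why a linear workflow does not need a framework, here is a minimal sketch of a scrape → score → write → publish pipeline as plain function composition. This is not our production code: every stage body, the sample items, and the scoring rule are hypothetical stand-ins, and the real pipeline calls model APIs where this one counts keywords.

```python
from functools import reduce

def scrape(_):
    """Stand-in for fetching articles; returns made-up items."""
    return [
        {"title": "Framework X ships v2", "body": "agents " * 40},
        {"title": "Unrelated post", "body": "cooking " * 40},
    ]

def score(items):
    """Stand-in for model-based scoring: count a keyword instead."""
    return [dict(item, score=item["body"].count("agents")) for item in items]

def write(items):
    """Keep relevant items and render them as a bullet list."""
    relevant = [item for item in items if item["score"] > 0]
    return "\n".join(f"- {item['title']}" for item in relevant)

def publish(text):
    """Stand-in for sending the issue; returns a status record."""
    return {"status": "published", "body": text}

PIPELINE = [scrape, score, write, publish]

def run_pipeline(stages, seed=None):
    """Feed each stage's output into the next one, in order."""
    return reduce(lambda data, stage: stage(data), stages, seed)

issue = run_pipeline(PIPELINE)
```

With no branching, no shared state, and no approvals, a list of functions is the whole orchestration layer. The moment a stage needs to loop back or wait for a human, that simplicity goes away, and a graph-based framework starts to earn its keep.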

Stay in the Loop

The AI agent ecosystem moves fast. We track every framework update, new tool, and production case study in our free newsletter.

AI Agents Weekly

The top AI agent news, curated 3x/week. Tools, frameworks, launches, and what actually works in production.

You can also grab our free resources:

- Top 10 AI Agent Tools 2026 (14-page PDF guide)

- AI Agent Stack Cheat Sheet (2-page reference card)

